Consumer Reports is the most popular and most trusted name in general-population reviews, but how good are they with cars? The magazine and its annual car book have been both praised and critiqued for years; I did a large critique on Allpar one or two decades ago, and recently revisited it. Not to turn this into a metacriticism, but there were problems with my critique of their critiques, too; maybe it was time to start fresh.

After acknowledging that I fully agree with their mission, and appreciate what they are trying to do, let’s start with problems specific to Consumer Reports, and then move on to problems that their peers also face.

The unique problems of Consumer Reports

There are essentially four issues with Consumer Reports itself:

1) Integrity

When CR people discovered my commentary at Allpar, they sent me a cease-and-desist letter, claiming that because they had trademarked the name, I could not use it at all. This is, as several lawyers quickly wrote in to inform me, balderdash. Jeep is also a registered trademark, and I can write as many bad things about Jeep as I like. It’s almost as though CR can’t take any criticism—which normally is a sign of an organization that knows it has issues.

The oil study. Consumer Reports does not take advertising; but that doesn’t mean it has untouchable integrity. Let’s look, for example, at their July 1996 oil study (I would have newer examples but I no longer subscribe, for obvious reasons).

Consumers Union (which publishes CR) had a laudable goal: to cut through the marketing of oil companies and see if pricey well-advertised oils were any better than cheap generic ones, and also to see how big the difference between “dino juice” (I know it’s not from dinosaurs) and synthetics was. Their method was, on the surface, sensible—though also wrong: they put their oils into New York City taxis. This would theoretically allow them to accelerate the wear on the oil, so they would not have to wait ten years for the study to finish. However, since the taxis run 24 hours a day, they never cool down, so one primary advantage of some oils—protection against cold starts—was never put into play.

To their chagrin, the researchers found that none of the oils was better than the others at stopping engine wear. They had the chance, then, to do a two-page spread on how hard testing is, and on how strong their integrity was—that they had put a huge amount of time and money into a study, but could not actually make any conclusions. Instead, they stated that any natural oil is interchangeable with any other, but that synthetics (which had fared no better) were still worth the extra money.

Another challenge to their integrity was their long-standing habit of noting, in just about every summary of cars with Chrysler’s four-speed automatic, long after the (quite real) problems had been resolved, how awful the transmission was. However, they did not do the same for Honda, whose Odyssey had severe transmission problems, or for Ford, whose four-speeds had similar issues. Indeed, in one annual summary, CR actually had a little sidebar on how the worst transmission they’d ever seen was actually the one in all those Fords—but a casual reader would never have known.

Another indication of integrity issues, from back in the 1990s, was shown when different brands of the same car would have different reliability ratings. That suggested, perhaps, that there were issues with specific options/trim packages/features, or maybe that the ratings had natural variance and the difference between one rating and the next was not particularly large—perhaps not significant. The magazine solved that by lumping the ratings together, which admittedly did increase the n for the vehicles involved.

2) Feedback loop and systemic bias

Consumer Reports surveys its own members, not the general public.

There are good and bad aspects of that. One benefit is that if car companies wish to influence the results, say by claiming they own their competitor’s car and it’s been horrible, they have to buy a subscription. Another benefit of sampling your own subscribers is cost reduction: it is cheaper to survey your own members than it is to acquire a sample from a third party. CR can also have a decently high response rate, which I estimated years ago (based on a Detroit News story) to be roughly 5% to 10%. That’s not bad, for market research.

The problem is that they can bring people into a feedback loop, which is why every other organization I know of does random sampling. As Consumer Reports writers harped on particular issues, people who had never noticed a flaw might suddenly discover it (which is one reason, by the way, why car companies really hated the idea of making service bulletins public). Once people get the idea they might have a problem, they want it fixed, even if they don’t really have it. In this case, CR’s writing sensitizes people to possible problems. Remember that four-speed Chrysler automatic? Once you think it’s a bad transmission, every screwed-up shift (and every transmission sometimes “guesses wrong”) becomes a fault that gets reported back on the survey form.

This effect is more powerful because volunteers often have a high need for approval (according to a good amount of research), and Consumer Reports is not shy about giving out demand characteristics; social desirability effects therefore should have a pretty strong impact on what readers say. People actually change their perceptions to match what they think an interviewer wants. It’s a good reason for seasoning the magazine’s usual reader-based surveys with some random input from people outside their little feedback loop.

To quote from Raymond DeGennaro II, in that Allpar story: “They have to prove that their data represents the general public, and they haven’t.” That proof could come just once every ten years, if it turns out that our assumptions are wrong.

One quick anecdote: in one year, the Toyota Corolla and Chevrolet Nova were both made by Toyota in a single California plant; the sole differences were the radio and the intake manifold (the Chevy used Toyota’s Japanese design, while the Toyota used a special one for the United States and Canada). There was a large spread in ratings between these nearly identical cars, made by the same company, in the same plant, with almost all the same parts.

3) Not defining what they want

Consumer Reports’ surveys, at least the last ones I saw, asked people to report only serious problems. They did not define “serious” in the survey form. That reinforces the feedback loop in #2; buyers of favored cars can match their reality to the desired result by redefining problems as “not serious,” while buyers of “troublesome” cars can redefine minor problems as “serious.”

As a side note, there is an interaction here with the vehicle brand. Some companies specify rather onerous and frequent maintenance steps, while others do not. My old article had old examples, from the 1980s and early 1990s, where “maintenance” was covering up serious problems in Toyota and Nissan cars.

4) Bias

Despite Consumer Reports’ frequent claims, it is not free from bias—but it is free from advertising.

Advertising was a huge problem for some of the magazines doing car comparisons in the past; yes, it is still a problem for online sites. Yes, CR is definitely more credible than many other comparison sources. But no, they are not free from biases.

It is almost impossible to be free from bias, as any credible researcher knows; the best one can do is to try to report the biases in the discussion section, and let others make up their own minds.

We could go into the various forms that bias takes, but some were quite obvious when looking at individual car comparisons. For many years, under a director whose previous job was at Chrysler, any review of a Dodge, Chrysler, Plymouth, or (in later years) Jeep focused on every possible drawback; the magazine reviewed the Plymouth-Dodge Neon three times in one year, each review getting worse, at one point referring to its “anemic” engine which was far more powerful than any other standard compact-car powerplant of the day. In one 2005 comparison, including the Volvo S40, Acura TSX, and two other cars, they mentioned the Volvo’s poor rear legroom five times, though on the next page, they admitted it had the same or more legroom than the other cars. The Acura had “good” gas mileage while the Volvo’s was merely “acceptable,” though they were the same (and, to boot, the Volvo took regular while the Acura took premium).

Consumers themselves bring their own personal biases, too. As with the researchers, the best one can do is to air those biases openly, create awareness, and give readers a degree of discretion.

There is always a bias, and proclaiming that none exists goes beyond facile; it also provides further evidence of problem #1.

General issues with reliability surveys

Here, we have an embarrassment of riches, despite the best efforts of many dedicated and honest people.

Not enough information to judge differences

Every measurement has some error. There are statistics that can be used to see if a difference between two groups is due to chance and measurement errors, or is more likely an actual underlying difference. One of those is the standard deviation; another is the number of cases in the sample (e.g. people reporting on their impressions of the Chrysler Pacifica minivan). It’s very hard to tell whether there is a significant difference between rankings in CR’s reports. Is there a significant difference between a white dot and a half-red dot? Between a full black dot and a white dot? Between the best and worst? We can’t tell. We don’t know the variance for any particular model, or the average variance, for that matter; we don’t even know how many people reported in on any particular model. In the past, the magazine actually published movie reviews (as judged by their readers with a monthly survey), even for movies with fewer than five responses.

CR is hardly alone in this. Few ratings groups report this information publicly, so it is impossible to tell whether a mildly above average car (or whatever) is really better than a mildly below average one.
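To make the point concrete, here is a minimal sketch of why the standard deviation and the number of cases both matter. The figures are hypothetical (no ratings group publishes them), and the interval uses a plain normal approximation rather than whatever method a professional survey house would choose.

```python
import math

def difference_interval(mean_a, sd_a, n_a, mean_b, sd_b, n_b, z=1.96):
    """Approximate 95% interval for (mean_a - mean_b) from summary statistics."""
    # Standard error of the difference between two independent sample means.
    se = math.sqrt(sd_a**2 / n_a + sd_b**2 / n_b)
    diff = mean_a - mean_b
    return diff - z * se, diff + z * se

def looks_different(interval):
    """True when the interval excludes zero, so chance alone is an unlikely explanation."""
    low, high = interval
    return low > 0 or high < 0

# Hypothetical numbers: problems per car for two models with the same averages
# and the same spread, but very different numbers of respondents.
small = difference_interval(1.25, 1.2, 40, 1.15, 1.2, 40)
large = difference_interval(1.25, 1.2, 4000, 1.15, 1.2, 4000)
print(looks_different(small), looks_different(large))
```

With 40 owners per model the interval easily spans zero; with 4,000 it does not. The published averages would be identical in both cases, which is why a rating without the spread and the sample size tells you so little.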

Not telling us what goes wrong

WhatCar? once had just about the perfect setup for predicting the quality of cars and trucks, unfortunately restricted to the United Kingdom. Taken from actual repair records of leased cars, it provided some specifics the editors could use to figure out if a problem had been fixed. If one particular issue afflicted a particular car, they could (and did) contact the manufacturer to find out if it was fixed, and if so, when. That would have helped, say, the Chrysler Cirrus launch; one piece of trim was made incorrectly (a supplier had changed the plastic type), and 100% of early Cirrus cars had to have it replaced. That gained it a big black dot in CR, the lowest rating, though the rest of the car was fine, and any car that was likely to be on the lots by the time buyers got that issue would not have the same problem.

WhatCar? would tell you about that—CR generally does not. Nor do J.D. Power or any of the others. To a degree, you could glean it from TrueDelta, which opened a few years back to counter CR’s in-built problems—but no longer tries to do reliability ratings, due to the amount of work it required.

Lumping cars together

Would you expect a 702-horse 4×4 truck to have the same reliability as a base V6 pickup with less than half the power and rear wheel drive? That’s what happens if you don’t split the Ram 1500 series up. Different powertrains and trim levels could yield very different results, but to keep sample sizes rational, they are usually lumped together into one. CR actually went so far as to bring corporate siblings together, pooling their data, which conveniently covers up anomalies in their results; on the plus side, it is defensible since it also makes their numbers less randomly variable (more cases, less random fluctuation). Sometimes they took it too far, as did other outlets; for example, bringing the short and long wheelbase Caravan and Voyager into one big clump. To a degree that is unavoidable, especially with slow sellers; but Caravan and Voyager have at times had extremely high sales.
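The trade-off in pooling can be sketched in a few lines. All of the numbers here are made up for illustration; the only real statistics involved are the textbook formulas for a standard error and a weighted mean.

```python
import math

def standard_error(sd, n):
    """Standard error of a sample mean: spread of individual answers / sqrt(sample size)."""
    return sd / math.sqrt(n)

def pooled_mean(groups):
    """Weighted mean across (mean, n) pairs, which is what lumping variants together reports."""
    total_n = sum(n for _, n in groups)
    return sum(mean * n for mean, n in groups) / total_n

# Hypothetical variants of one truck line: (problems per car, number of responses).
base_v6 = (0.8, 300)
high_output = (1.6, 100)

print(standard_error(1.2, 100))              # noise with one variant's 100 responses
print(standard_error(1.2, 400))              # noise after pooling to 400 responses
print(pooled_mean([base_v6, high_output]))   # a single figure that matches neither variant
```

Quadrupling the sample halves the random noise, which is the defensible part. The cost is that the pooled figure sits between the two variants and describes neither of them.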

Splitting hairs

At times, J.D. Power has made the standard deviation part of their chart; usually, though, it is not included, and there are no error bars. What’s more, the chart’s axis does not start at zero; it starts at roughly the lowest score and goes to the highest, which increases the space between bars—and may suggest real differences in quality, when scores are within the statistical margin of error. Indeed, simply having rankings rather than groups of automakers emphasizes the differences between brands or models. Consumer Reports has a five-point scale, but doesn’t tell us if there are significant differences between, say, half-black and half-red (and in some years they have also done rankings).

Bar charts which show exact ratings are nice in that they provide more information, but without knowing the margin of error, you really can’t tell whether there’s an actual difference. That can be the same with just five ratings. People make big decisions based on these differences, and sometimes they might just be splitting hairs.
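A quick bit of arithmetic shows how much a truncated axis can exaggerate. The two scores are the 115 and 125 problems per 100 cars used elsewhere in this article; the axis starting point of 110 is a hypothetical value picked for illustration.

```python
def apparent_ratio(score_a, score_b, axis_start=0.0):
    """Ratio of the drawn bar lengths when the chart's axis begins at axis_start."""
    return (score_b - axis_start) / (score_a - axis_start)

# Axis starting at zero: bar lengths are proportional to the actual scores.
honest = apparent_ratio(115, 125)

# Axis starting just below the lowest score (110 is an assumed value).
truncated = apparent_ratio(115, 125, axis_start=110)

print(round(honest, 2), round(truncated, 2))
```

On a zero-based axis the second bar is about 9% longer than the first. Start the axis at 110 and it is drawn three times as long, for exactly the same scores.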

Survey-related problems

Every survey-based system has problems with people who do not reply to the surveys. Some go to great lengths to sample the nonrespondents and compare their results to those who did send surveys in. Some don’t. It’s quite possible those who do not respond to surveys will have different results. The only way to tell is to devote a good deal of time and effort to testing the numbers now and then.

Surveys bring other issues, too. People may not remember what went wrong, and there are, again, feedback issues; if you own a car that’s reputed to be ultra-reliable, you may actually be more forgiving when something goes wrong. When you get to the three-year ownership point, remembering all the repairs on a particular car may be hard.

There’s even the question, which is almost impossible to avoid, of figuring out what was actually fixed—whether it was maintenance or a flaw, whether it was a user-induced problem (e.g. hitting a curb or an immense pothole), and whether you basically had the same problem with five or six repairs because the dealer had lousy performance. These issues are very hard to root out with surveys.

TrueDelta tried by doing monthly surveys and asking whether the problem was solved, and whether it was part of an earlier problem. They also went deeper and asked what the specific problems were, so they could figure out if the issue with a car was just one thing, which may have been eventually fixed by the automaker, making it a safer buy. However, they’re not around today. WhatCar? was even more clever, getting data directly from leasing companies throughout Britain, so they had an extremely large sample, representative of cars people actually bought (CR tends to be light on some vehicles, the ones CR tends to never recommend); and their very specific information revealed when “multiple problems” were actually the same problem, not fixed the first time by the dealer or mechanic. WhatCar? is also out of that business, but their model was probably as close to perfection as we can ever get.

Maintenance differences

This is no longer as large an issue as it used to be, but periodic maintenance requirements also feed into “reliability.” Honda used to have extremely high maintenance demands, including adjusting solid lifters and repacking the front wheel bearings every year or two. Chrysler, on the other hand, tended to have much looser maintenance demands. If the car is constantly going into the dealer for owner-pays costs, is that less of a problem than a squeak or a rattle?

More to the point, the constant maintenance—and different maintenance—given to some cars can affect their reliability over time. A 1997 Detroit News article noted that Honda owners even cleaned their garage floors regularly, more often than buyers of American car brands. One would think such meticulous habits would also extend to changing the oil and other fluids according to the schedule, filling tires to the right pressure as needed, and not neglecting anything, which should yield a more reliable car. Likewise, buyers of certain cars can be expected to have lower reliability because their car is “pushed” more—sporty cars, performance sedans, offroad vehicles, work trucks, delivery vans. Does anyone control for this kind of systematic use error in their surveys? As far as I know, nobody does; nor would I want to, in their place. It does make it much easier for the tamer, more sedate cars to do well.

How do you measure quality?

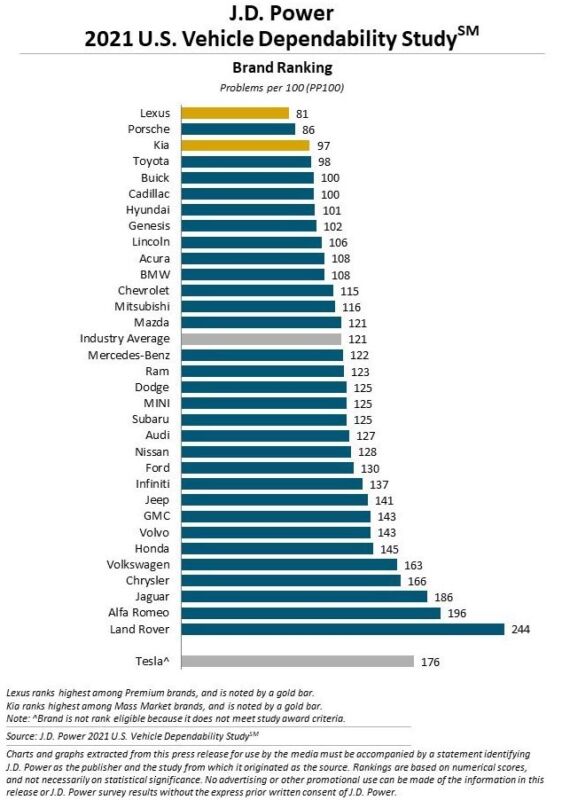

J.D. Power collects survey data at two time periods: the 90-day survey (the customer has owned their vehicle for just three months) is said to measure “initial quality” (the Initial Quality Study, or IQS), while the three-year one measures “dependability” (the Vehicle Dependability Study, or VDS; the word was, ironically, invented by a Dodge Brothers marketing man). IQS intends to measure the quality coming out of the assembly plant, as well as the dealer preparation of the vehicle; VDS seeks to measure the quality of the vehicle throughout the customer’s ownership.

Take a second look at the goal of the IQS. A brand like Mercedes, which does a great deal of at-plant inspection and whose dealers have time and money to reinspect the car when it arrives, is likely to do much better than a company whose actual production quality may be better, but which doesn’t do much inspection of particular cars.

Is quality really a matter of “fewer unscheduled dealer trips,” or, as CR sometimes implied in its reviews, gaps between dashboard elements and poorly trimmed upholstery? Is it doing what the vehicle is supposed to do, predictably and well? Is it durability, making it to 200,000 miles with minimal work? Is it higher quality to have a vehicle with high maintenance costs and few breakdowns, or one with low maintenance costs and a few more breakdowns? There’s no hard and fast rule for quality. Even durability is up for grabs: does durability mean taking 6,000-pound payloads in your pickup over a bunch of logs every day, or is it driving your Corolla for 200,000 miles with very few repairs?

There are some problems we are just not likely to resolve any time soon. However, that doesn’t mean we should stop trying.

Where do you go from here?

Where do you go from here? Mainly, keep your eyes open and be aware of the drawbacks of the various providers of ratings. Consumer Reports is still generally more reliable than the comments section of Car & Driver or some random blog, especially one like reallygoodcarreviews-dot-com or some such. (Or greatlaserprinterreviews-dot-com for that matter.)

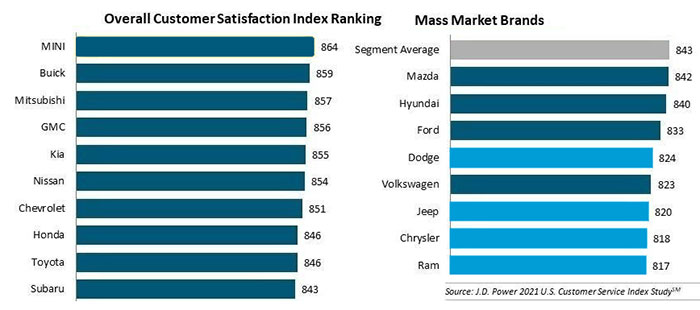

However, if you are agonizing over whether to buy the car you really like because it has just average reliability, you can probably rest assured that it’s not significantly different from one a few lines up in the chart. The extremes are where you may be concerned, in any of these reviews. Those who wind up at the very bottom of J.D. Power are probably not your safest choice. Keep things in perspective, though; if Chevrolet is rated at 115 problems/100 cars in J.D. Power and Dodge is rated at 125, that just means you’re only slightly more likely to have a problem in your Dodge during the first 3 years (see above). On the other hand, I’d take the numbers for the real outliers seriously; if you ask me whether I’d feel safer buying a Lexus IS or Alfa Romeo Giulia, the answer is Lexus, all the way. But an Avalon vs a Charger? With Toyota at 98 and Dodge at 125, that’s roughly one problem vs one and a quarter problems, over three years; the Charger is looking fine.
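For anyone who wants to see that arithmetic spelled out, here is a rough sketch using the scores quoted above. The per-car conversion is just division by 100. The "chance of any problem" figure goes further and rests on an assumption of mine, that problems arrive independently (a Poisson model); J.D. Power does not publish anything of the sort.

```python
import math

def problems_per_car(problems_per_100):
    """Convert a problems-per-100-cars score into average problems per car."""
    return problems_per_100 / 100

def chance_of_any_problem(problems_per_100):
    """P(at least one problem), ASSUMING problems follow a Poisson distribution."""
    return 1 - math.exp(-problems_per_car(problems_per_100))

# Scores as quoted in the text, covering the first three years of ownership.
for brand, score in [("Toyota", 98), ("Chevrolet", 115), ("Dodge", 125)]:
    print(brand, problems_per_car(score), round(chance_of_any_problem(score), 2))
```

Under that assumption, the chance of seeing at least one problem in three years is roughly 62% for the Toyota score and roughly 71% for the Dodge score: a real gap, but not a gulf.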
